In the ever-evolving landscape of artificial intelligence, efficient resource management remains a cornerstone of successful operations. Nvidia has made a striking move by open-sourcing the KAI Scheduler, an advanced GPU scheduling solution designed to integrate seamlessly with Kubernetes. This initiative not only demonstrates Nvidia’s adaptability but also highlights its commitment to fostering innovation in the open-source community. The KAI Scheduler, now freely available under the Apache 2.0 license, aims to redefine how IT and machine learning (ML) teams manage their resources, especially in environments where GPU demands can fluctuate drastically.

Nvidia’s decision to open-source such a sophisticated tool invites collaboration and contribution from a broader range of developers, thereby enriching the ecosystem. By placing the KAI Scheduler in the hands of the community, Nvidia gives organizations of all sizes access to the technology while opening its further development to outside contributors.

Meeting the Challenges of AI Workloads

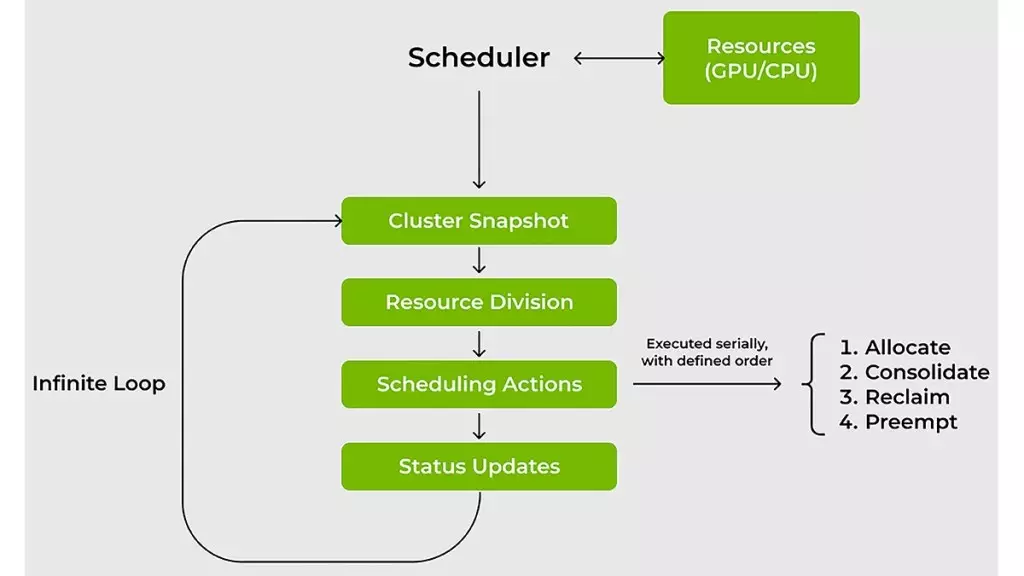

AI workloads are notorious for their variability, requiring organizations to adapt their resource management strategies to accommodate rapid changes. Traditional resource schedulers falter under these conditions, often leading to inefficiencies and wasted computational power. The KAI Scheduler addresses these challenges head-on. It continuously recalibrates resource allocations based on real-time analysis of workload demands, effectively tackling issues related to GPU allocation and compute access. This adaptability is not just advantageous; it is essential for modern AI development where speed is of the essence.

Nvidia’s scheduler leverages concepts such as gang scheduling and hierarchical queuing, allowing teams to submit multiple jobs in batches without constant monitoring. This flexibility reduces wait times, enabling engineers to focus on innovation rather than logistics. The scheduler’s ability to dynamically adjust quotas and limits in response to workload changes means that ML teams can trust that resources are allocated fairly and efficiently, which matters in a field where time is often the most critical resource.
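To make the gang scheduling idea concrete, here is a minimal Python sketch of all-or-nothing placement: a job's pods are either all placed together or the job stays pending and holds no GPUs. It illustrates the general concept only and is not the KAI Scheduler's implementation; the `Job` class, the `gang_schedule` function, and the first-fit node choice are assumptions made for this example.

```python
from dataclasses import dataclass


@dataclass
class Job:
    name: str
    pods: int          # number of pods that must start together
    gpus_per_pod: int  # GPUs requested by each pod


def gang_schedule(jobs, free_gpus_per_node):
    """Place each job all-or-nothing (hypothetical helper, first-fit per pod).

    Returns (placements, pending): placements maps a job name to the node
    index chosen for each of its pods; pending lists jobs that did not fit.
    """
    free = list(free_gpus_per_node)
    placements, pending = {}, []
    for job in jobs:
        trial = free.copy()  # tentative state, discarded if the gang does not fit
        assigned = []
        for _ in range(job.pods):
            node = next(
                (i for i, gpus in enumerate(trial) if gpus >= job.gpus_per_pod),
                None,
            )
            if node is None:
                break
            trial[node] -= job.gpus_per_pod
            assigned.append(node)
        if len(assigned) == job.pods:
            free = trial  # commit only when every pod has a node
            placements[job.name] = assigned
        else:
            pending.append(job.name)  # partial placements are rolled back
    return placements, pending
```

With three nodes holding 4, 4, and 2 free GPUs, a four-pod job requesting 2 GPUs per pod fills the first two nodes. A following three-pod job of the same size could place only one pod, so it is left pending in full, and the 2 GPUs it would otherwise have held remain available to a smaller job.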

Innovative Resource Management Strategies

The KAI Scheduler employs two key strategies, bin-packing and spreading, to optimize resource usage. Bin-packing maximizes compute utilization by packing smaller tasks onto partially used GPUs and CPUs, thereby combating resource fragmentation. This is crucial in shared clusters, where capacity often sits idle because it is scattered in pieces too small for larger jobs.

Spreading, by contrast, distributes workloads evenly across all available resources, so that no single point in the cluster becomes a bottleneck. Having both strategies available lets teams choose between dense utilization and balanced load. Researchers and data scientists will find that these methods not only enhance performance but also help maintain a steady flow of productivity, which is vital in competitive AI environments.
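The difference between the two placement strategies can be shown with a short Python sketch. It is a simplified model under stated assumptions, not KAI Scheduler code: nodes are represented only by their count of free GPUs, and `pick_node` and `place_all` are hypothetical helpers written for this example.

```python
def pick_node(free_gpus, request, strategy):
    """Return the index of the node chosen for a pod, or None if none fits."""
    candidates = [i for i, free in enumerate(free_gpus) if free >= request]
    if not candidates:
        return None
    if strategy == "binpack":
        # Tightest fit: the node with the fewest free GPUs that still fits.
        return min(candidates, key=lambda i: free_gpus[i])
    if strategy == "spread":
        # Loosest fit: the node with the most free GPUs.
        return max(candidates, key=lambda i: free_gpus[i])
    raise ValueError(f"unknown strategy: {strategy}")


def place_all(free_gpus, requests, strategy):
    """Place requests one by one; return (chosen node per request, free GPUs left)."""
    free = list(free_gpus)
    chosen = []
    for request in requests:
        node = pick_node(free, request, strategy)
        chosen.append(node)
        if node is not None:
            free[node] -= request
    return chosen, free
```

Placing four 2-GPU pods on three nodes with 8 free GPUs each, the bin-packing variant fills one node and leaves two nodes entirely free for a later 8-GPU job. The spreading variant lowers the load on every node, but afterwards no single node can host an 8-GPU job. That trade-off is the fragmentation issue the article describes.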

Building Bridges Between AI Frameworks

Another hallmark of the KAI Scheduler is its ability to simplify the often-complex integration with various AI frameworks such as Kubeflow and Ray. In the past, teams faced hurdles in aligning their workloads with the necessary tools, which often involved laborious manual configurations. The KAI Scheduler features an intelligent podgrouper that automatically detects and integrates with these frameworks, slashing the time spent on setup and allowing teams to dive straight into development. This level of convenience accelerates the prototyping process, ensuring that innovation is not stifled by administrative complexities.
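As a rough illustration of what automatic grouping could look like, the Python sketch below collects pods that share the same owning workload into one group, so they can be scheduled as a unit. This is an assumption about the general approach, not the podgrouper's actual logic: the dictionaries are simplified stand-ins for Kubernetes pod metadata, and `group_pods_by_owner` is a hypothetical function.

```python
from collections import defaultdict


def group_pods_by_owner(pods):
    """Group pod names by (owner kind, owner name); hypothetical helper.

    Pods without an owner form a single-member group of their own.
    """
    groups = defaultdict(list)
    for pod in pods:
        owner = pod.get("owner")
        if owner:
            key = (owner["kind"], owner["name"])
        else:
            key = ("Pod", pod["name"])
        groups[key].append(pod["name"])
    return dict(groups)
```

Given a head pod and a worker pod that both belong to a `RayCluster` named `demo`, plus one standalone pod, the function returns two groups: one with both Ray pods and one with the standalone pod alone.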

Encouraging collaboration not only enhances individual projects but also spurs an overall culture of shared knowledge. By simplifying these processes, Nvidia is taking significant steps to foster an environment where creativity and collaboration can flourish, ultimately pushing the boundaries of what is possible in AI.

Nvidia’s KAI Scheduler serves as a powerful catalyst for change in the AI landscape. By open-sourcing this innovative tool, Nvidia demonstrates a commitment to enhancing operational efficiency through collaboration, effectively enabling organizations to seize the full potential of their GPU resources. With its focus on real-time adaptability and seamless integration, the KAI Scheduler is set to become a cornerstone in the management of AI workloads, empowering teams and accelerating the pace of innovation.