Food quality assessment is changing quickly as machine learning matures. Imagine standing in front of a pile of apples, trying to pick the ripest and freshest, and wondering whether a smartphone app could guide your selection. The scenario shows where an everyday consumer dilemma meets advanced machine-learning methods. Although current machine-learning models show promise, they lack the adaptability and precision of human observers when evaluating food quality under varying environmental conditions. Recent findings from the Arkansas Agricultural Experiment Station, however, point toward systems that combine human input with artificial intelligence to improve how food quality is judged.

The study, recently published in the Journal of Food Engineering, was led by Dongyi Wang, an assistant professor working on smart agriculture, and offers insight into how machine learning can be augmented with human data. Wang’s research team analyzed human perceptions of food quality and showed two things: human evaluations shift with lighting (a perceptual phenomenon referred to as “temporal” bias), and machine-learning models predict food quality more consistently when trained on data that reflects those lighting conditions.

Traditional methods that rely solely on artificial intelligence do not account for the subjectivity of human sensory evaluation. As Wang observed: “Understanding human perception is critical in developing more sophisticated machine-learning models.” By incorporating human sensory data, gathered from experiments in which participants evaluated food images under various lighting conditions, these models can improve their predictive accuracy.

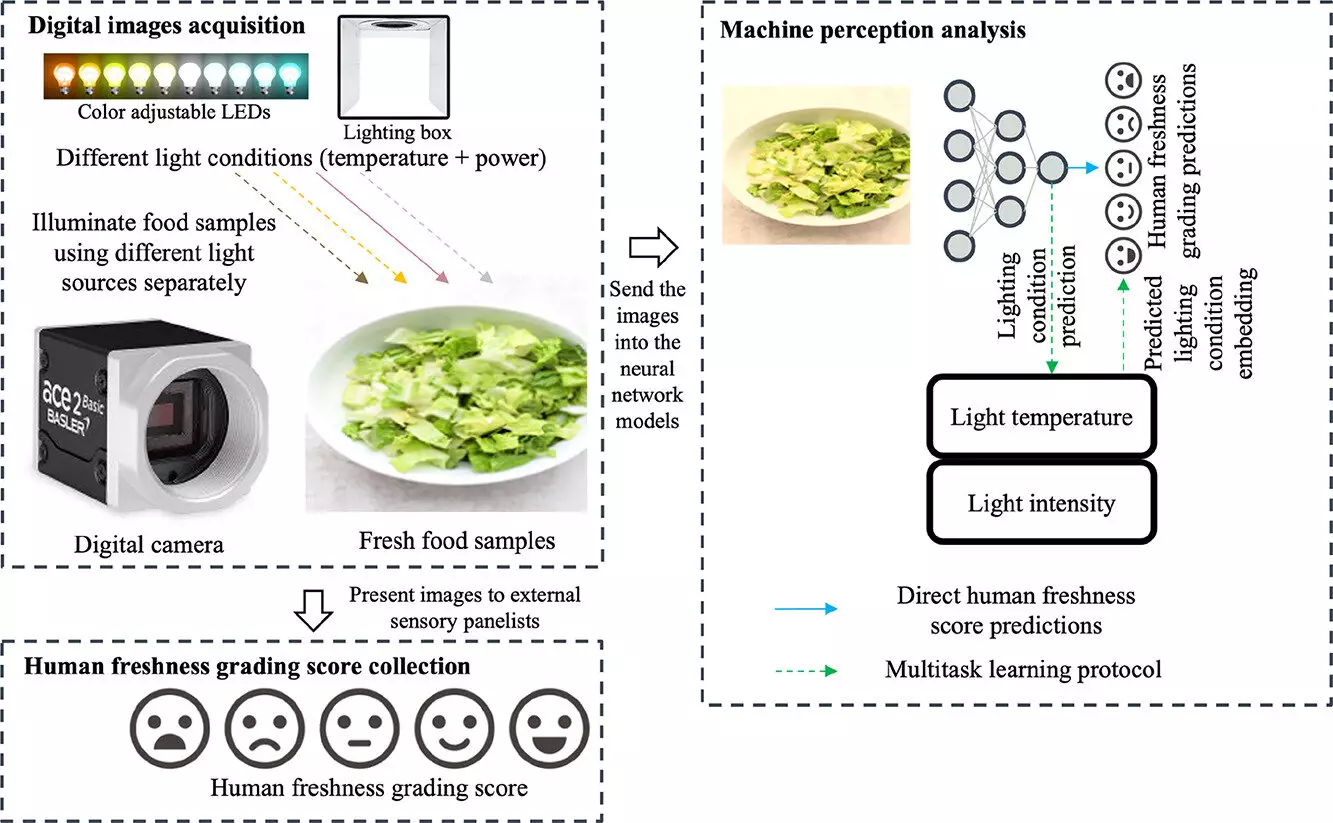

The experimental design is central to the study’s findings. Researchers used Romaine lettuce as the test subject and collected data from a diverse sensory panel of 109 participants. Over five days, participants rated the freshness of 75 images per day; the image set comprised 675 images of lettuce photographed under different color tones and brightness levels. Participants were screened to confirm normal vision, which reduced the risk of bias from color-vision deficiencies.
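To make the data-collection step concrete, here is a minimal sketch of how panel ratings might be condensed into one score per image and lighting condition. Everything in it is an assumption for illustration: the tuple layout, the lighting labels, the example scores, and the choice of a plain average are hypothetical and are not taken from the study.

```python
from collections import defaultdict
from statistics import mean


def consensus_scores(ratings):
    """Average panel ratings per (image_id, lighting) pair.

    ratings: iterable of (participant_id, image_id, lighting, score) tuples.
    The layout and the use of a simple mean are illustrative assumptions.
    """
    grouped = defaultdict(list)
    for _participant, image_id, lighting, score in ratings:
        grouped[(image_id, lighting)].append(score)
    return {key: mean(scores) for key, scores in grouped.items()}


# Hypothetical ratings: the same lettuce image judged under two lighting setups.
ratings = [
    ("p1", "lettuce_01", "warm_dim", 6),
    ("p2", "lettuce_01", "warm_dim", 8),
    ("p1", "lettuce_01", "cool_bright", 4),
    ("p2", "lettuce_01", "cool_bright", 5),
]
scores = consensus_scores(ratings)
```

Keeping the lighting condition in the key, rather than averaging it away, preserves the variation the study set out to measure.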

This design let the researchers capture a broad range of human perceptions and benchmark the machine-learning models against human evaluations. By training several neural network models on the data, they built predictors that reflect not only static visual information but also the way human perception changes with viewing conditions.
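The idea of training on lighting-dependent human scores can be illustrated with a toy example. The sketch below is not the researchers' neural network: it is a small linear model fitted by gradient descent on synthetic data, in which the hypothetical panel score depends on both the lettuce's age and the brightness of the photo. The score formula, the feature names, and the hyperparameters are all assumptions.

```python
def fit_linear(rows, targets, epochs=2000, lr=0.5):
    """Fit a linear model (weights plus bias) by batch gradient descent."""
    n, n_features = len(rows), len(rows[0])
    weights, bias = [0.0] * n_features, 0.0
    for _ in range(epochs):
        errors = [
            bias + sum(w * x for w, x in zip(weights, row)) - y
            for row, y in zip(rows, targets)
        ]
        bias -= lr * sum(errors) / n
        weights = [
            w - lr * sum(e * row[j] for e, row in zip(errors, rows)) / n
            for j, w in enumerate(weights)
        ]
    return weights, bias


def mean_abs_error(model, rows, targets):
    """Mean absolute difference between model predictions and targets."""
    weights, bias = model
    preds = [bias + sum(w * x for w, x in zip(weights, row)) for row in rows]
    return sum(abs(p - y) for p, y in zip(preds, targets)) / len(targets)


# Synthetic panel scores (assumption): freshness falls with age, and the panel
# rates the same lettuce one point higher or lower depending on brightness.
samples = [(day / 4, brightness) for day in range(5) for brightness in (-1, 0, 1)]
panel_scores = [9 - 6 * age + brightness for age, brightness in samples]

age_only = [[age] for age, _ in samples]
age_and_light = [[age, brightness] for age, brightness in samples]

baseline_mae = mean_abs_error(fit_linear(age_only, panel_scores), age_only, panel_scores)
aware_mae = mean_abs_error(fit_linear(age_and_light, panel_scores), age_and_light, panel_scores)
```

On this synthetic data the model that sees the brightness feature matches the panel scores far more closely than the age-only model, because the lighting effect is otherwise unexplained variation. Real images and real panel data would be much noisier.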

The study found that machine prediction errors could be reduced by 20% when the models incorporated human perceptions as influenced by lighting conditions. An improvement of that size could change how machine-learning models are built and used in many domains, from food evaluation to sectors such as jewelry assessment, where appearance is paramount.
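For readers who want to see what a 20% reduction means numerically, the sketch below computes a relative error reduction. The function name and the example error values are hypothetical; the article does not say which error metric the study used.

```python
def relative_error_reduction(baseline_error, improved_error):
    """Fraction by which the error shrinks relative to the baseline."""
    if baseline_error <= 0:
        raise ValueError("baseline_error must be positive")
    return (baseline_error - improved_error) / baseline_error


# Hypothetical values: an error of 0.50 falling to 0.40 is a reduction of about 20%.
reduction = relative_error_reduction(0.50, 0.40)
```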

Traditional machine vision systems often rely on basic color information or “human-labeled ground truths.” Wang’s work highlights a significant oversight in these systems: they frequently ignore variations in illumination and the biases those variations introduce into human judgment and decision-making. The implications therefore extend beyond food quality to the methods commonly used in machine learning and computer vision.
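A short sketch shows why a color-only cue is fragile under changing illumination. The "green score" below is a deliberately naive, hypothetical feature, and the pixel values and dimming factor are made up; it is not a description of any particular machine vision system.

```python
def green_score(pixels):
    """Naive color-only freshness cue: mean green-channel value scaled to [0, 1]."""
    return sum(g for _, g, _ in pixels) / (255 * len(pixels))


def dim(pixels, factor):
    """Simulate darker lighting by scaling every RGB channel by the same factor."""
    return [tuple(min(255, round(c * factor)) for c in p) for p in pixels]


# Hypothetical RGB samples from one lettuce leaf.
leaf = [(80, 170, 60), (95, 150, 70), (70, 180, 55)]

normal = green_score(leaf)
dimmed = green_score(dim(leaf, 0.6))
```

The leaf is the same in both cases, yet the score drops under dimmer light, so a system that relies on this cue alone would read a lighting change as a loss of freshness.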

The findings could matter in any industry where visual quality assessment is crucial. Retail and hospitality, for example, could benefit from technologies that combine human sensory data with machine learning. Grocery stores might use the findings to improve product presentation so that customers see visually appealing items that meet high quality standards.

The study also opens the door to further research on how consumer preferences shift, which could inform marketing strategies that account for the way environmental factors affect a product’s visual appeal. Algorithms that adapt to changing consumer tastes could emerge as well, particularly as artificial intelligence continues to advance.

As artificial intelligence spreads, the fusion of human perception and machine learning in food quality assessment reflects a broader trend across sectors. Future studies are likely to extend this research and test how human insights can be used within technological frameworks. In this view, technology does not replace human observation; it complements and enhances it, producing systems that are more robust, accurate, and aligned with consumer expectations. Ultimately, this collaboration may lead to more holistic approaches to quality assessment, benefiting producers and consumers alike.